- Blog

- Stalker kill zombie quest

- Boycold music tag

- Night book game

- Monthly household budget template

- Twistedwave for pc

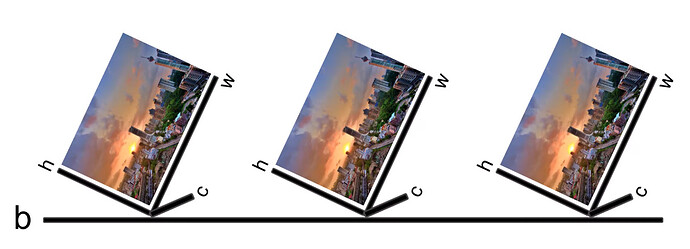

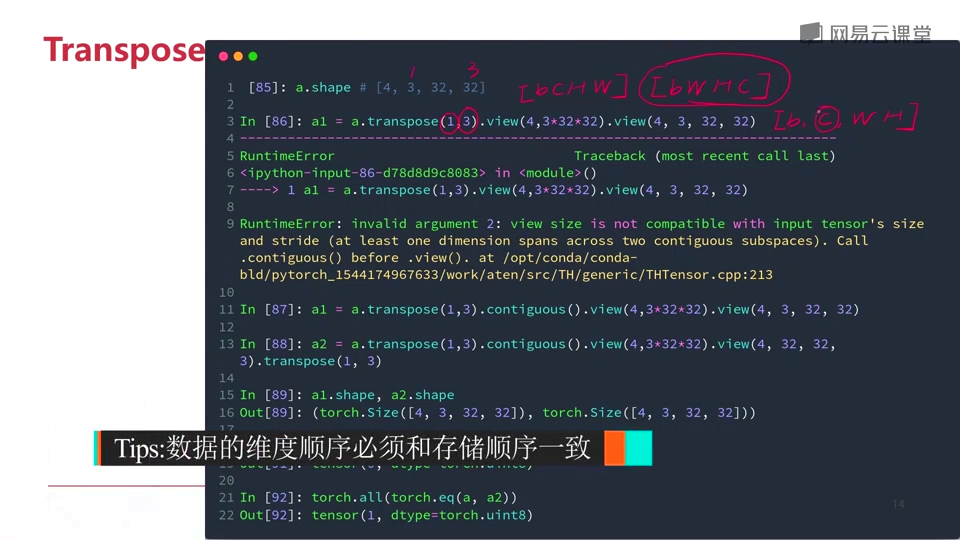

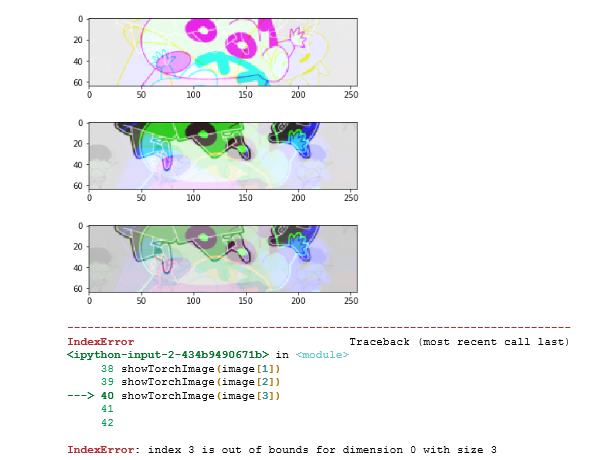

- Torch permute

- Royal order of jesters san antonio

- Jurassic world evolution 2 wiki

- Chivalry 2 beta end time

- Atom rpg trudograd locations

- Bookwright image resolution

- Young women in eastern star order

- 128x128 image converter

In this case I think permute, as proposed, would do exactly what a user expects: return the specified permutation of the array.
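To make "the specified permutation" concrete, here is a minimal sketch using the `Tensor.permute` method. The tensor shape and values are made up for illustration.

```python
import torch

# A small tensor with three distinguishable dimensions: shape (2, 3, 4).
x = torch.arange(24).reshape(2, 3, 4)

# Reorder dimensions: new dim 0 is old dim 2, new dim 1 is old dim 0,
# new dim 2 is old dim 1.
y = x.permute(2, 0, 1)

print(y.shape)  # torch.Size([4, 2, 3])

# Element y[a, b, c] is the same element as x[b, c, a].
assert y[3, 1, 2] == x[1, 2, 3]

# permute returns a view, so no data is copied.
assert y.data_ptr() == x.data_ptr()
```

Because the result is a view, it is usually non-contiguous. Call `.contiguous()` on it if a later operation such as `.view()` requires contiguous memory.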


When building an AI model with PyTorch, you may get this error: AttributeError: module 'torch' has no attribute 'permute'. In this tutorial, we will show how to fix it.

If you use with torch.no_grad(), you disallow any possible back-propagation from the perceptual loss.
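Returning to the AttributeError mentioned above: one likely cause, stated here as an assumption, is an older PyTorch release that has the `Tensor.permute` method but not the module-level `torch.permute` function. The sketch below uses a hypothetical helper, `permute_compat`, that works in both cases. Upgrading PyTorch is the other obvious fix.

```python
import torch


def permute_compat(t, dims):
    """Hypothetical helper: permute a tensor whether or not torch.permute exists."""
    if hasattr(torch, "permute"):
        # Function form, available in newer PyTorch releases.
        return torch.permute(t, dims)
    # Method form, which works on older releases as well.
    return t.permute(*dims)


# Example: convert an image from height-width-channel to channel-height-width.
img_hwc = torch.zeros(32, 48, 3)
img_chw = permute_compat(img_hwc, (2, 0, 1))
print(img_chw.shape)  # torch.Size([3, 32, 48])
```

In most code the simplest change is to replace `torch.permute(x, dims)` with `x.permute(*dims)` directly.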


My understanding of with torch.no_grad() is that it completely switches off the autograd mechanism. If this is true, and it is used in the forward pass of the VGG perceptual loss, what are you computing the loss for? The purpose of computing a loss is to get gradients to update the model parameters. I think doing this would be a big blunder.

The training time is much slower and the batch size is much smaller compared to training without perceptual loss.
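The concern about `torch.no_grad()` can be checked on a toy example. This is a minimal sketch with made-up scalar values, not the perceptual loss itself: a result computed inside the context has no graph attached, while the same computation outside it back-propagates normally.

```python
import torch

w = torch.tensor([2.0], requires_grad=True)  # stands in for a model parameter
x = torch.tensor([3.0])                      # stands in for an input

# Inside no_grad, autograd does not record the operation.
with torch.no_grad():
    y_off = (w * x).sum()

# Outside no_grad, the graph is recorded and backward() works.
y_on = (w * x).sum()
y_on.backward()

print(y_off.requires_grad)  # False
print(w.grad)               # tensor([3.])
```

Calling `y_off.backward()` would raise an error, which is why wrapping the entire loss computation in `torch.no_grad()` leaves nothing to train on.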


Hi, I use your code to compute the perceptual loss. Do you need to add "with torch.no_grad()" before computing the VGG features? I think it could reduce memory usage.
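One way to reconcile the two views, sketched under assumptions: freeze the feature extractor's parameters and apply `torch.no_grad()` only to the target branch, so that gradients still reach the prediction. The `features` network below is a tiny untrained stand-in for VGG, and `pred` and `target` are random tensors, all chosen only so the example runs without downloading weights.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical stand-in for a frozen VGG feature block.
features = nn.Sequential(nn.Conv2d(3, 4, kernel_size=3, padding=1), nn.ReLU()).eval()
for p in features.parameters():
    p.requires_grad_(False)  # the extractor itself is never trained

pred = torch.rand(1, 3, 8, 8, requires_grad=True)  # plays the role of the model output
target = torch.rand(1, 3, 8, 8)                    # ground truth, needs no gradient

# Target features: no graph is needed, so no_grad is safe here.
with torch.no_grad():
    target_feat = features(target)

# Prediction features: computed normally so the loss can back-propagate.
pred_feat = features(pred)

loss = nn.functional.l1_loss(pred_feat, target_feat)
loss.backward()

print(pred.grad is not None)  # True
```

Whether this actually saves memory depends on the setup. If the extractor's parameters are already frozen and the target does not require gradients, autograd records nothing for the target branch anyway, and `no_grad` mainly makes that intent explicit.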

    transform(target, mode='bilinear', size=(224, 224), align_corners=False)
    for i, block in enumerate(self.
    transform(input, mode='bilinear', size=(224, 224), align_corners=False)
    view(1, 3, 1, 1))
    def forward(self, input, target, feature_layers=, style_layers=):